The open-source engine that runs your skills.

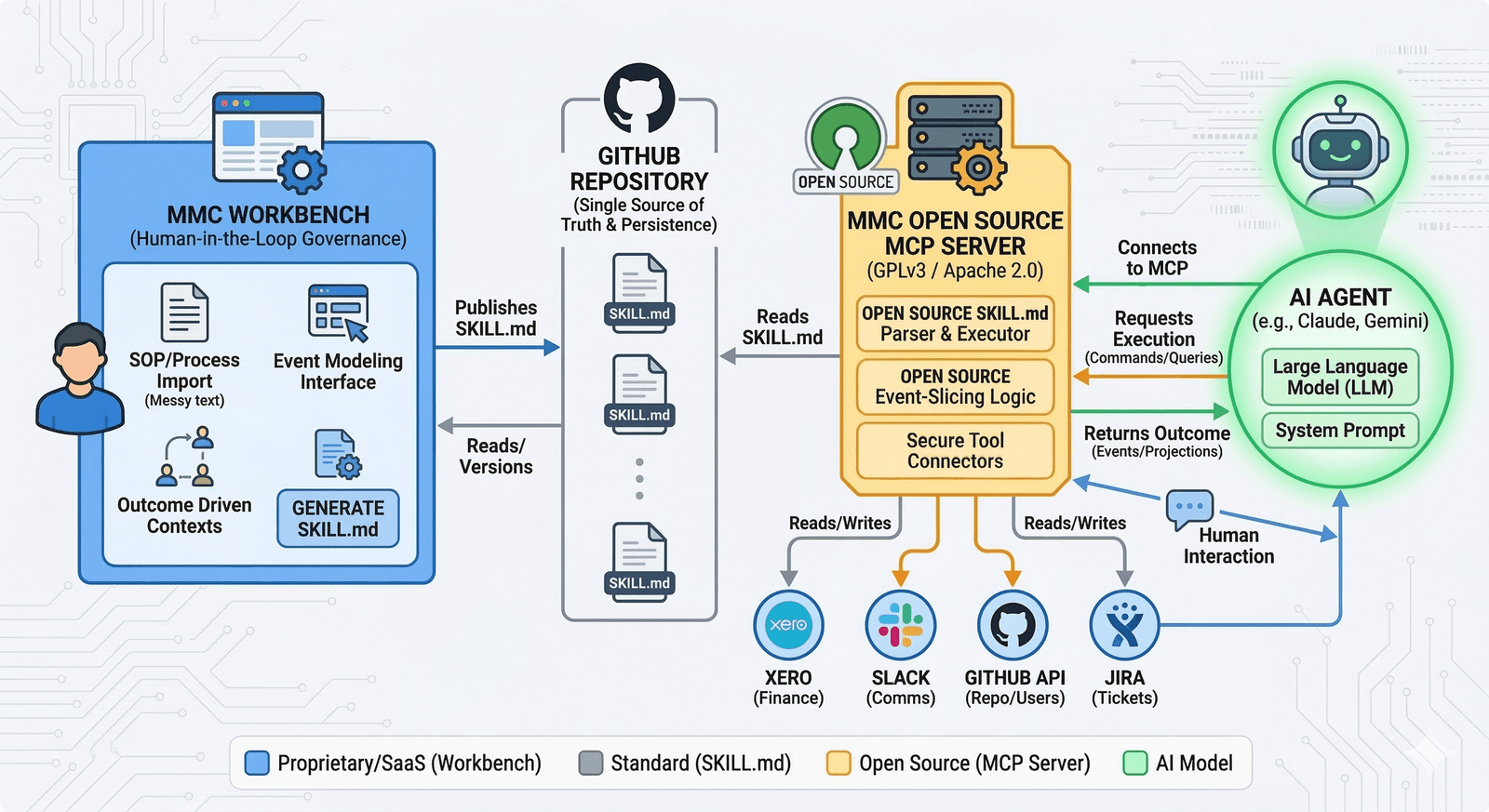

The MMC MCP Server reads your SKILL.md files from GitHub, executes the business logic, and connects to your tools — all without sending your data to any MMC database.
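As a rough illustration of the "reads your SKILL.md files from GitHub" step, the sketch below fetches one file through GitHub's REST contents endpoint. It is not the MCP Server's actual code: the function names, the `main` default branch, and the assumption that the file sits at the repository root are all hypothetical. The repository and file names are the example values used further down this page.

```python
import urllib.request
from urllib.parse import quote

GITHUB_API = "https://api.github.com"


def build_skill_request(repo: str, path: str, token: str,
                        ref: str = "main") -> urllib.request.Request:
    """Build an authenticated request for the raw contents of one SKILL.md file.

    `repo` is "owner/name". The default `ref` of "main" is an assumption.
    """
    url = (f"{GITHUB_API}/repos/{repo}/contents/{quote(path)}"
           f"?ref={quote(ref, safe='')}")
    return urllib.request.Request(url, headers={
        # Ask GitHub for the raw file body instead of a JSON envelope.
        "Accept": "application/vnd.github.raw+json",
        "Authorization": f"Bearer {token}",
    })


def fetch_skill(repo: str, path: str, token: str, ref: str = "main") -> str:
    """Download the SKILL.md text directly from GitHub (performs a network call)."""
    request = build_skill_request(repo, path, token, ref)
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8")
```

In this sketch the only network hop is between the machine running the code and GitHub, which is the property the paragraph above describes.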

Three layers. One standard.

The MMC architecture separates authoring (proprietary Workbench), logic storage (open SKILL.md standard), and execution (open-source MCP Server) into three independent, auditable layers.

Every layer is independently auditable.

What happens when your AI calls a skill.

tool: slice-1-receive-request

role: claims-processor

source: your-org/mmc-skills

file: slice-1-receive-request.SKILL.md

Scenario A: invalid inputs → error

Scenario B: valid → log event
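The two scenarios above can be sketched as a single handler. This is an illustrative outline, not the engine's implementation: the `EventBus` class, the `request-received` event name, and the required input fields are hypothetical stand-ins.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class EventBus:
    """Hypothetical in-memory bus: records each event, then dispatches it."""
    routes: Dict[str, Callable[[dict], Any]] = field(default_factory=dict)
    audit_log: List[dict] = field(default_factory=list)

    def log_event(self, event_type: str, payload: dict) -> Optional[Any]:
        # Every event is appended first, so the log doubles as the audit trail.
        self.audit_log.append({"type": event_type, "payload": payload})
        next_skill = self.routes.get(event_type)
        return next_skill(payload) if next_skill else None


# Assumed input contract for this example only.
REQUIRED_FIELDS = ("claim_id", "amount")


def slice_1_receive_request(inputs: dict, bus: EventBus) -> dict:
    """Scenario A: invalid inputs return an error. Scenario B: valid inputs log an event."""
    missing = [name for name in REQUIRED_FIELDS if name not in inputs]
    if missing:
        return {"status": "error", "missing": missing}
    bus.log_event("request-received", inputs)
    return {"status": "ok"}
```

Registering a follow-up handler under `routes["request-received"]` is how this sketch models handing off to the next skill. Invalid calls never reach the bus, so they leave no event behind.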

log-event-to-bus. The bus then dispatches to the next skill in the flow, creating a fully traceable, step-by-step audit trail of every AI decision.

Your tools. Your infrastructure.

The MCP Server connects to your existing business systems via secure connectors. All reads and writes happen from infrastructure you control — never routed through MMC servers.
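One way to picture a connector is as a small read/write interface that skill logic calls, with the concrete implementation running inside your own environment. The sketch below is an assumption about the shape of such an interface, not the MCP Server's real connector API. `Connector`, `InMemoryClaimsStore`, and `approve_claim` are hypothetical names.

```python
from typing import Dict, Optional, Protocol


class Connector(Protocol):
    """Hypothetical connector contract: the only surface skill logic touches."""

    def read(self, key: str) -> Optional[dict]: ...

    def write(self, key: str, record: dict) -> None: ...


class InMemoryClaimsStore:
    """Stand-in for a business system that lives on infrastructure you control."""

    def __init__(self) -> None:
        self._rows: Dict[str, dict] = {}

    def read(self, key: str) -> Optional[dict]:
        return self._rows.get(key)

    def write(self, key: str, record: dict) -> None:
        self._rows[key] = dict(record)  # store a copy so callers cannot mutate it


def approve_claim(store: Connector, claim_id: str) -> dict:
    """Read a claim, mark it approved, and write it back through the connector."""
    claim = store.read(claim_id)
    if claim is None:
        raise KeyError(claim_id)
    updated = {**claim, "status": "approved"}
    store.write(claim_id, updated)
    return updated
```

Because `approve_claim` depends only on the interface, the in-memory store could be swapped for a database or API client without changing the skill logic.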

Run it on your infrastructure.

The MCP Server is a standalone process you can run anywhere — your cloud, your VPC, your laptop. No dependency on MMC staying in business. No vendor lock-in. Just an open binary and your GitHub repo.

Your procurement team already approved GitHub. There is no MMC database to review. No sensitive data touches our infrastructure.

.mcpb and drag it into Claude Desktop; the bundled runtime does the rest.

- Download mmc-mcp.mcpb from GitHub Releases

- Open Claude Desktop → Settings → Extensions and drag the file in

- Fill in your OpenRouter API key and GitHub token when prompted

- Toggle the extension on — ask Claude "What MCP tools do you have from mmc-mcp?" to verify

Fork it. Audit it. Own it completely.

The MCP Engine is free, open, and always will be. Start with the Workbench to generate your first SKILL.md, then connect the engine to any AI agent.

Works with Claude, Gemini & any MCP-compatible agent · Your GitHub, your data